Understanding Agentic AI: Is It Mostly Business Logic Around LLMs?

As Agentic AI dominates industry headlines, developers and business leaders alike are asking a fair question: is "Agentic AI" mostly classic business logic built around an LLM?

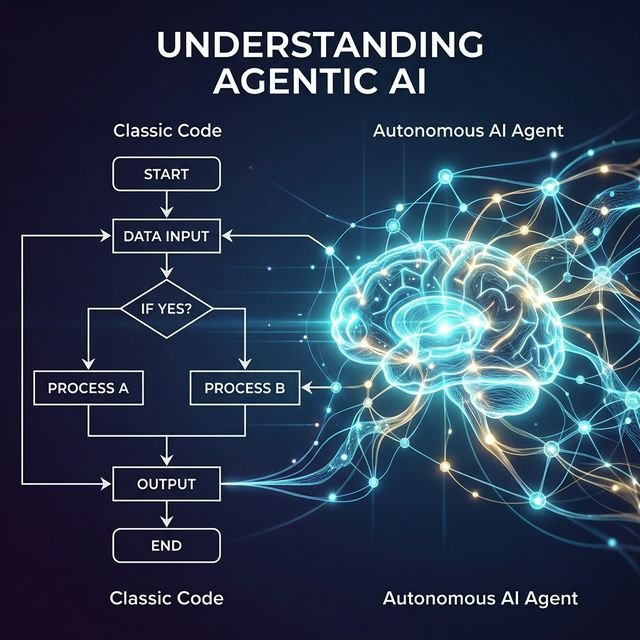

It’s easy to feel like the mystique is wearing off. When you look under the hood of many "agentic" apps, they seem to rely heavily on classic software engineering: selecting predefined actions, looping reasoning steps, chaining tasks together, and running basic if/else conditional flows. Some have even suggested that "Agentic AI" is merely a fancy marketing term for automated LLM workflows.

Are we oversimplifying it? Or is there something genuinely revolutionary happening? Using the Feynman Technique (explaining an idea in simple language rather than jargon), let’s break down what makes AI truly "agentic."

Level 1: The Skeptic’s View (The Fancy Flowchart)

To understand Agentic AI, we first have to understand why it sometimes looks like a plain old flowchart. If you build a system that takes a user's question, retrieves documents (RAG), feeds those into an LLM, and outputs an answer, you have built a powerful tool. But you have not built an agent.
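As a rough illustration of such a fixed pipeline, here is a minimal sketch. The corpus, the word-overlap `retrieve` function, and `fake_llm` are all hypothetical stand-ins (a real system would call a search index and a model API); the point is only that the order of steps never changes.

```python
from typing import Callable

# Hypothetical in-memory corpus; a real system would use a search index.
DOCUMENTS = [
    "Refunds are processed within five business days.",
    "Shipping is free on orders above fifty dollars.",
    "Support is available on weekdays from nine to five.",
]


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the question."""
    question_words = set(question.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & set(doc.lower().rstrip(".").split())),
        reverse=True,
    )
    return ranked[:top_k]


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call (assumption): echoes the first context line."""
    context_line = prompt.split("\n")[1]
    return f"Based on our records: {context_line}"


def answer(question: str, llm: Callable[[str], str] = fake_llm) -> str:
    # Fixed control flow: retrieve, build the prompt, generate.
    # Nothing in this function decides what to do next.
    context = retrieve(question, DOCUMENTS)
    prompt = "Context:\n" + "\n".join(context) + f"\nQuestion: {question}"
    return llm(prompt)
```

Calling `answer("How long do refunds take?")` always runs the same three steps in the same order, whatever the question is.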

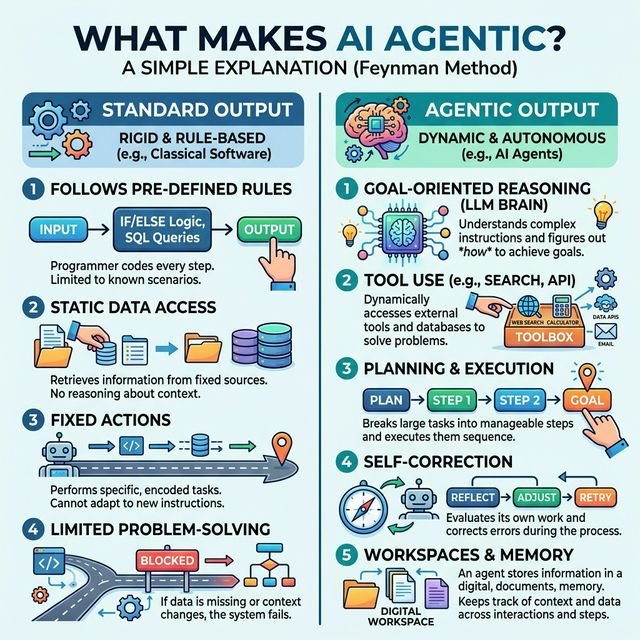

In classic software engineering, logic is "hard-coded." If condition A happens, execute function B. If the variables are known and meticulously tracked, standard programming works perfectly.

However, the magic of Agentic AI isn't in the flowchart; it's in the addition of a "brain" that can guess well enough what those variables should be when the path is ambiguous.
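The contrast can be sketched in a few lines. In this hypothetical example, `hard_coded_route` handles only the condition its author anticipated, while `model_route` lets a model propose the action and keeps plain code in charge of validating it. `fake_choose` is an assumed stand-in for an LLM call, and the action names are invented for illustration.

```python
from typing import Callable

# Hypothetical action table; the names are illustrative only.
ACTIONS = {
    "refund": lambda: "refund issued",
    "escalate": lambda: "ticket sent to a human",
}


def hard_coded_route(message: str) -> str:
    # Classic business logic: only the anticipated keyword is handled.
    if "refund" in message.lower():
        return "refund"
    return "escalate"


def model_route(message: str, choose: Callable[[str, list[str]], str]) -> str:
    # The model proposes an action name; ordinary code still validates it.
    proposed = choose(message, sorted(ACTIONS))
    return proposed if proposed in ACTIONS else "escalate"


def fake_choose(message: str, options: list[str]) -> str:
    """Stand-in for an LLM (assumption): a real model would infer intent
    from wording the programmer never listed."""
    phrases = ("refund", "money back", "charged twice")
    return "refund" if any(p in message.lower() for p in phrases) else "escalate"
```

For the message "I was charged twice", the hard-coded router falls through to `escalate`, while the model-backed router picks `refund`. Note that the surrounding validation is still ordinary business logic; only the guess in the middle is new.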

Level 2: The Missing Ingredient (The Guessing Machine)

Let’s use a simple analogy. Imagine you own a restaurant. You have three massive databases: one containing hundreds of thousands of recipes, one containing all your recent stock invoices, and one tracking customer receipts.

You need to answer one question: "What should I put on the menu this week?"

Even a team of seasoned SQL engineers cannot write a simple query for that. Why? Because the variables are completely open-ended. What ingredients are fresh? What recipes match the chef’s skill level? Is there a holiday coming up?

A traditional script fails here. But an Agentic AI system acts as a reasoning engine. It breaks the open-ended task into sub-tasks. It orchestrates a "Stock Manager" sub-agent to review invoices, a "Chef" sub-agent to review recipes, and an "Event Planning" sub-agent to check the calendar. They make educated, probabilistic guesses, combine their findings, and return a robust menu. The logic lives in natural-language prompts ("soft prompting," in the loose sense used here) rather than in hard-coded rules.
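The orchestration described above might be sketched as follows. Everything here is an assumption made for illustration: the three sub-agents return canned findings instead of querying real databases, and `plan_subtasks` stands in for the model's decomposition step, which in a real system would be generated per goal.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    source: str
    note: str


# Each sub-agent is a stand-in that returns a canned finding (assumption).
def stock_manager(goal: str) -> Finding:
    return Finding("stock", "tomatoes and basil arrived this week")


def chef(goal: str) -> Finding:
    return Finding("recipes", "caprese salad fits the kitchen's skill level")


def event_planner(goal: str) -> Finding:
    return Finding("calendar", "a local holiday falls on Friday")


SUB_AGENTS: dict[str, Callable[[str], Finding]] = {
    "stock": stock_manager,
    "recipes": chef,
    "calendar": event_planner,
}


def plan_subtasks(goal: str) -> list[str]:
    """Stand-in for the model's decomposition of an open-ended goal."""
    return ["stock", "recipes", "calendar"]


def orchestrate(goal: str) -> str:
    # Run every planned sub-agent, then combine the findings into one brief.
    findings = [SUB_AGENTS[name](goal) for name in plan_subtasks(goal)]
    return "; ".join(f"{f.source}: {f.note}" for f in findings)
```

`orchestrate("What should I put on the menu this week?")` returns a single combined brief. In this sketch the plan is fixed, so it is still a workflow; the agentic part would be a model choosing which sub-agents to call and in what order.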

Level 3: The 5 Pillars of True Agency

So, if an agent isn’t just an LLM in a loop, what is it? A system becomes more "agentic" the more of the following five capabilities it has:

1. Commands (Effectful Actions): The ability to actually do things in the real world. Not just chatting, but sending an HTTP POST request to create a calendar event or deleting a spam email.

2. Queries (Tool Use): The ability to extend its own knowledge by running web searches, executing code, or calling external APIs to fill gaps in its training data.

3. Planning & Self-Correction: The ability to formulate a multi-step plan, attempt the first step, realize it failed, and autonomously generate a new plan without human intervention.

4. Local Workspaces: The ability to purposefully store, update, and evict information from its own memory "scratchpad," preserving its context window for long-running tasks.

5. Self-Modeling: The agent knows what it is natively capable of (and what it isn't). It knows when to escalate to a human or when to delegate to an expert sub-agent.
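To make the five capabilities concrete, here is a deliberately small sketch of a scheduling agent. All names (`Workspace`, `query_free_slot`, `command_create_event`, `SUPPORTED_TASKS`) are hypothetical, the calendar is a plain list, and the decision logic is deterministic; in a real agent a model would choose each next step.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Pillar 4: a small scratchpad that evicts its oldest note when full."""
    notes: list[str] = field(default_factory=list)
    max_notes: int = 3

    def remember(self, note: str) -> None:
        self.notes.append(note)
        if len(self.notes) > self.max_notes:
            self.notes.pop(0)


def query_free_slot(day: str) -> str:
    """Pillar 2 (query): read-only lookup against a hypothetical calendar."""
    if day == "monday":
        raise LookupError("monday is fully booked")
    return f"{day} 10:00"


def command_create_event(calendar: list[str], slot: str) -> str:
    """Pillar 1 (command): an effectful action that changes external state."""
    calendar.append(slot)
    return f"event created at {slot}"


SUPPORTED_TASKS = {"schedule_meeting"}


def run_agent(
    task: str, candidate_days: list[str], calendar: list[str]
) -> tuple[str, Workspace]:
    workspace = Workspace()
    # Pillar 5 (self-model): escalate tasks outside the agent's abilities.
    if task not in SUPPORTED_TASKS:
        return "escalated to a human", workspace
    # Pillar 3 (planning and self-correction): try a day; on failure,
    # record why and move to the next candidate without human help.
    for day in candidate_days:
        try:
            slot = query_free_slot(day)
        except LookupError as error:
            workspace.remember(f"failed: {error}")
            continue
        workspace.remember(f"found {slot}")
        return command_create_event(calendar, slot), workspace
    return "escalated to a human", workspace
```

With `candidate_days=["monday", "tuesday"]`, the agent notes the Monday failure in its workspace, recovers on Tuesday, and only then performs the one action with a side effect.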

Level 4: Real-World Agentic Examples

Let’s look at how these pillars shape real-world behavior, far beyond basic text generation.

1. The Multi-Agent Orchestrator

You give a system the goal: "Write a comprehensive market research report on the EV industry." A non-agentic LLM just spits out a generic 500-word essay. A multi-agent system deploys a "Researcher Agent" to browse the web for live data, a "Writer Agent" to draft the findings, and a "Critic Agent" to review the draft and force the Writer to fix inaccuracies. The final output is heavily refined without a human pressing "retry."
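A minimal sketch of that Researcher, Writer, and Critic loop is shown below. The three functions are hypothetical stand-ins for model-backed agents: the researcher returns placeholder strings rather than live data, and the writer is rigged to omit a fact on its first draft so that the critic has something to catch.

```python
def researcher(topic: str) -> list[str]:
    """Stand-in for a web-browsing agent; returns placeholders, not real data."""
    return [f"placeholder fact A about {topic}", f"placeholder fact B about {topic}"]


def writer(topic: str, facts: list[str], feedback: list[str]) -> str:
    """Stand-in for a drafting agent: the first draft deliberately omits a
    fact, and a revision made after feedback includes all of them."""
    cited = facts if feedback else facts[:1]
    return f"Report on {topic}: " + "; ".join(cited)


def critic(draft: str, facts: list[str]) -> list[str]:
    """Stand-in for a reviewing agent: flags researched facts missing from the draft."""
    return [f"missing: {fact}" for fact in facts if fact not in draft]


def produce_report(topic: str, max_rounds: int = 3) -> tuple[str, int]:
    facts = researcher(topic)
    feedback: list[str] = []
    draft = ""
    for round_number in range(1, max_rounds + 1):
        draft = writer(topic, facts, feedback)
        feedback = critic(draft, facts)
        if not feedback:
            # The critic is satisfied, so stop without a human pressing "retry".
            return draft, round_number
    # Give up after max_rounds and return the latest draft.
    return draft, max_rounds
```

`produce_report("the EV industry")` finishes in two rounds here: the critic rejects the first draft, and the revised draft passes. The `max_rounds` cap is a design choice worth keeping in any real version, since two models can otherwise disagree indefinitely.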

2. Emergent Persona Behaviors

Some applications (like automated news aggregators driven by dozens of unique AI personas) display high agency simply because their behavior feels emergent. They aren’t following strict if/then trees; they are reading content, applying their programmed "biases" and "perspectives," and producing organic, consistent reactions entirely on their own.

Conclusion: Is the Mystique Gone?

It’s true that many current implementations labeled as "Agentic AI" are just simple LLM workflows. The mystique might wear off when you look at the raw Python code and see standard API calls wrapped in while-loops.

However, a truly agentic system isn’t defined by complex syntax; it’s defined by its autonomy. It dynamically generates actions based on evolving goals, self-assessment, and environmental context. It replaces the rigid, hard-coded flowchart with a flexible reasoning engine capable of reacting to the unknown.

Agentic AI is not just a marketing term. It is a fundamental shift from computing that executes to computing that reasons.


Frequently Asked Questions

Is Agentic AI just standard software logic?

While agentic systems use classic programming for structure (like loops and APIs), the defining feature is the LLM acting as a "reasoning engine" that makes probabilistic guesses and decisions when variables are unknown or ambiguous.

What differentiates an AI Agent from an LLM workflow?

An LLM workflow executes predefined text generation steps. A true AI Agent possesses autonomy: it sets its own sub-goals, uses tools, evaluates its own success, auto-corrects errors, and manages its own contextual memory.

What is an example of true agentic behavior?

One example is asking a system to "Design a weekly menu" while giving it access to databases of thousands of recipes, stock invoices, and customer receipts. A traditional script would fail, but an agent queries the data, plans a menu based on seasonality and stock, orchestrates sub-agents for specialized tasks, and returns a cohesive result.
